Something happened in Virginia in July 2024 that most of us missed at the time, because it looked like a routine grid incident. A lightning arrester failed. Standard stuff. Except that what followed was not standard at all: data centers yanked over a gigawatt of power off the grid in an instant, pivoting to backup generators to protect their cooling systems. A gigawatt is the electricity that roughly 750,000 homes use at once. Gone, instantly, because some servers got nervous about a voltage fluctuation.

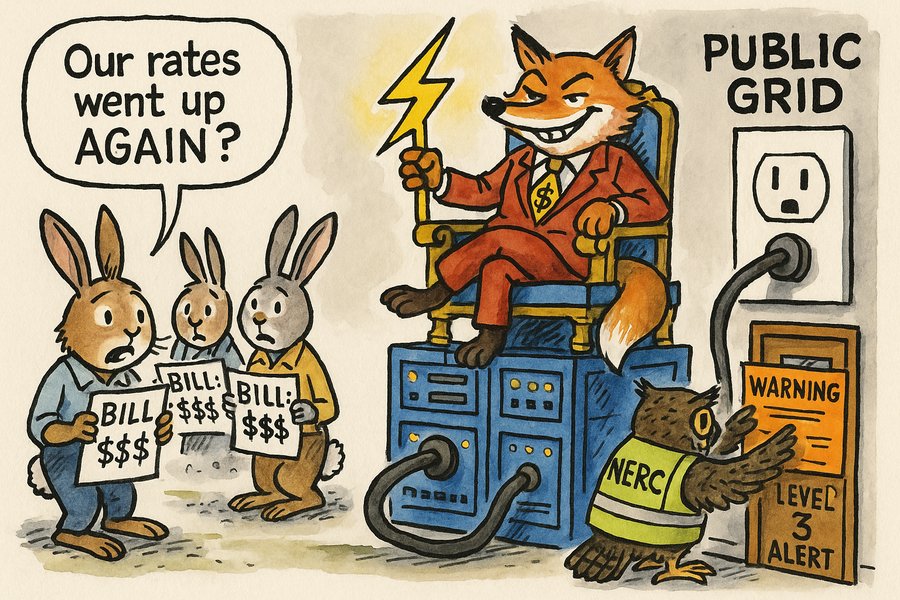

The grid held, that time. But the North American Electric Reliability Corp. has spent the eighteen months since watching similar incidents accumulate. This week it issued a Level 3 alert, the most severe warning in its playbook, and only the third such alert in its entire history. The cause: AI data centers are behaving in ways the grid was never designed to accommodate, and the regulator is serving notice that something needs to change.

I want to talk about what "something needs to change" means. And specifically, who is being asked to change it.

The data centers involved are owned by some of the most valuable companies in human history. Amazon, Microsoft, Google, Meta, and a handful of others collectively spent over $400 billion on infrastructure last year alone. That figure is larger than the combined annual capital expenditure of the entire global oil and gas production industry. Not some of it. All of it. A handful of technology companies, outspending every oil major, every state petroleum company, every pipeline operator on the planet, combined.

Those same companies do not pay for the transmission infrastructure that delivers their power. They do not pay for the grid upgrades required to handle their load growth. They are not, under current rules, even required to tell grid operators when they are coming online or going offline. They are the largest, most demanding electricity customers in history, and they have operated, until this week's alert, in a regulatory environment that treated them like ordinary commercial tenants rather than the utility-scale infrastructure they have become.

The costs do not vanish. They get distributed. When a data center triggers the need for a new substation or a transmission upgrade, the cost is typically socialised across all ratepayers in the region. When AI clusters grow in a community, housing prices rise, water usage climbs, and local grids are stressed in ways that require expensive upgrades funded by everyone who pays an electricity bill. The industry captures the productivity gains. The community absorbs the infrastructure burden.

This is not a new pattern. It is, in fact, the defining pattern of the AI economy so far. The machine's costs are socialised; its benefits are privatised. The training runs consume vast electricity drawn from grids the public built and maintains. The inference queries ride over fibre networks that public investment subsidised. The large language models were trained on intellectual property that humans created over generations, without compensation to those creators. And the workers whom AI is beginning to displace receive no severance payments, no transition support, and no social contract from the companies replacing them.

I am not arguing that the AI industry is uniquely villainous. Every major industrial revolution has followed this pattern. Railway companies took the land. Steel mills fouled the rivers. Automobile manufacturers made the public pay for the roads. The difference here is scale and speed: the AI industry's externalisation is happening faster than any regulatory framework has managed to absorb. The grid is the first place the accounting is becoming publicly visible, because electricity bills are something people actually read.

The residents of Loudoun County, Virginia, live in the data center capital of the world. They are also dealing with some of the most rapidly rising electricity bills in the United States. The correlation is not a coincidence. The data center operators whose servers run twenty-four hours a day in the county are not paying those bills. Their neighbours are.

The NERC alert is a small but meaningful corrective. It is saying, in the language of regulatory notices: you cannot keep building at this pace while treating the grid like a commons you bear no responsibility for. The recommendations, that data centers report their power swings, participate in grid stability planning, and submit to fault recording, are asking the industry to stop being a free rider on public infrastructure. NERC plans to write binding standards by year's end, pending approval from the Federal Energy Regulatory Commission.

The AI industry will respond, with some genuine justification, that tech companies are also major buyers of renewable and nuclear power through power purchase agreements. Some of that is true, and worth crediting. But it does not resolve the core accounting problem: the timing mismatches, the load volatility, the geographic concentration, and the sheer speed of scaling are imposing real costs on everyone else, right now, regardless of what will be connected to the grid by 2030.

What worries me is the familiar trajectory of regulatory capture. The Data Center Coalition has already put out a statement asking that any standards "do not single out one industry." That framing sounds reasonable. It is actually asking regulators to pretend that a sector consuming potentially 20% of U.S. electricity by 2030 is just another electricity customer, indistinguishable from a shopping mall or a factory. That is not neutrality. That is externalisation dressed up as equity.

The question underneath all of this is not a technical one. It is a political one: who decides where AI's costs land? The market, left alone, has already decided: costs land on the public, benefits flow to shareholders. That is not a law of nature. It is a policy choice that can be changed, if enough people notice the electricity bill and start asking why it keeps going up.

Whether policymakers have the institutional will and speed to act before the costs become entrenched is, honestly, uncertain. Regulatory frameworks move at the speed at which affected communities can organise. The AI industry moves at the speed of venture capital. That gap is, at the moment, very large. The NERC alert at least makes the problem official. What happens next depends on whether "official" is enough to generate action, or whether it joins the long archive of warnings that were noted, acknowledged, and quietly filed away.