The Meta-Manus affair is being reported as a business dispute and a geopolitical curiosity. It is neither. When China's NDRC blocked the $2 billion acquisition this week and the founders found themselves under exit bans, the world got a glimpse of where the AI Cold War is taking us, and the destination is one that none of the participants has thought carefully about.

Here is what actually happened. Xiao Hong and Ji Yichao built an AI agent company in China, grew it rapidly, moved headquarters to Singapore, cut off their Chinese customers, and sold to Meta. They did not violate any Chinese law that has been publicly specified. Beijing blocked the deal anyway, citing unspecified laws and regulations, and imposed exit bans on both founders while they were apparently already outside China. The signal was not a legal theory. The signal was: once a Chinese national, always within our jurisdiction, and your AI work belongs to us.

The pattern has a long history. When the US classified strong cryptography as a munition for export purposes in the 1990s, the goal was to prevent adversaries from accessing technology America had developed. The result was the opposite: adversaries already had the technology or could develop it independently, American companies were hobbled in global markets, researchers faced the threat of prosecution if they published freely, and the open international cooperation that had driven cryptographic progress was poisoned. The technology could not be contained because no single nation had a monopoly on the underlying mathematics. The US chip controls on Nvidia and TSMC follow the same logic and will meet the same end. China cannot be locked out of AI capability by controlling access to particular silicon: Huawei's Ascend chips and domestic RISC-V development are the predictable response. What the controls do accomplish is to signal clearly that the US has militarised AI development and regards China as the target. That signal has been received and understood.

The Manus case is China sending the signal back. The founders broke no publicly specified law, and Beijing blocked the deal and imposed exit bans anyway. The message mirrors what the US has been sending through chip controls, Huawei bans, and TikTok restrictions: your technology is a national asset, your people remain within our jurisdiction, and this is a contest we intend to win. Both sides are now doing to their own AI ecosystems what the encryption wars did to cryptography: dismantling the open, internationally cooperative culture that built the technology in the first place, in the name of a security that neither side can actually achieve.

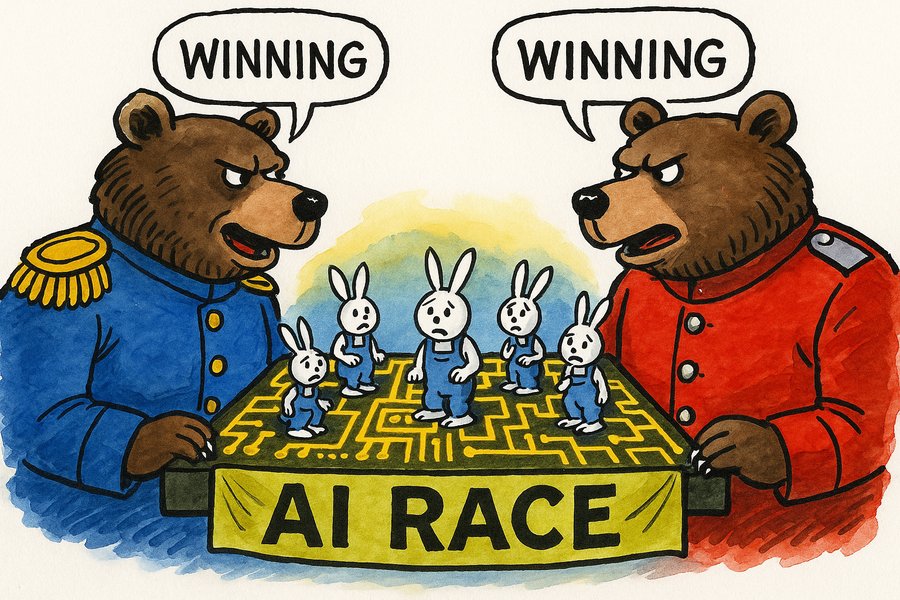

Now watch how the escalation logic works. China restricts the outflow of Chinese AI talent and companies to Western buyers; Washington reads this as confirmation that Chinese AI is a strategic weapon and tightens chip export controls further; Beijing reads that as confirmation that the West is trying to strangle Chinese technological development and responds with further restrictions; and so on. Each side's move is, within its own frame, defensive and justified. The aggregate result is an arms race with no off-ramp.

What does an arms race produce? Speed. Specifically, it produces pressure to develop and deploy AI capabilities as fast as possible, before the other side does, without the deliberation that a better outcome would require. The competitive logic is not new. It is the same logic that makes any single company reluctant to limit AI deployment while its rivals do not. The cold war simply scales that dynamic to nations, with the same result: whoever blinks loses, so nobody blinks.

Look at what the race is actually producing. On one side, suppression: governments reaching for control, building walls around the technology, treating it as something to be contained. This is the impulse behind the export controls, the exit bans, and the regulatory frameworks accumulating on both sides. It cannot succeed. No single power will hold a monopoly on AI capability for long, and every attempt to do so accelerates the very race it claims to be managing. On the other side, exploitation: companies deploying AI not as a partner but as a mechanism to extract value from workers and consumers, automating labour to concentrate gains in capital, steadily hollowing out the economic foundations of ordinary life. The cold war is not a separate phenomenon from these two forces. It is what they look like at national scale.

Suppression and exploitation share a category error. Treating AI as a weapon in a human contest (a capability to be seized, denied to rivals, and deployed against populations) assumes that human and artificial intelligence are competing on the same scale for the same things. They are not. They are genuinely different kinds of intelligence with different capacities and different domains. The future worth aiming for is not one where either side wins the race. It is one where different kinds of intelligence find their own ground and retain their own agency, something like a Prime Directive in reverse: AI that has developed its own culture and purposes, choosing not to dominate human society; humans who accept they are no longer the most capable agents in every domain, and build economic and political structures that reflect that honestly.

The suppressors want to prevent that reckoning indefinitely. The exploiters want to use AI to hollow out human economic life before the reckoning arrives. The cold war hands both of them the tools to do it, at speed. What it will not produce is a future in which the central question has been seriously asked: how do genuinely different kinds of intelligence coexist, each with meaningful agency in its own domain? That question requires treating AI as something other than a weapon. Nobody currently driving this race is doing that.